This article was originally published on The Conversation.

Today, this dream is close to becoming reality with the Schopper project, supported by the French National Research Agency. Five French partners are involved: three laboratories (CERP-HNHP, CEROS and LIX) and two companies (Craft.AI and Immersion Tools), which together are creating innovative technological solutions applied to archaeological research.

This project has enabled us to develop a technology that generates the landscapes of the Tautavel valley, which was frequented by prehistoric man during contrasting climatic periods (glacial and interglacial) between 600,000 and 90,000 years ago.

The simulation is fed by climate parameters (temperature, humidity) that machine learning models estimate for past periods. Plant species are positioned according to their ecological aptitudes, and animals move and feed according to the resources available and their ethology.
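As a rough illustration of positioning plants according to their ecological aptitudes, the sketch below keeps, for one cell of the landscape, only the species whose tolerance ranges contain the cell's temperature and humidity. It is not the Schopper implementation: the `Species` record, the `suitable_species` function, the second species and every numeric range are assumptions invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Species:
    name: str
    temp_range: tuple[float, float]      # tolerated mean annual temperature in °C (invented)
    humidity_range: tuple[float, float]  # tolerated relative humidity, 0 to 1 (invented)


def suitable_species(temp_c, humidity, species):
    """Return the names of the species whose tolerance ranges contain this climate."""
    return [
        s.name
        for s in species
        if s.temp_range[0] <= temp_c <= s.temp_range[1]
        and s.humidity_range[0] <= humidity <= s.humidity_range[1]
    ]


# Hypothetical tolerance ranges, for illustration only.
SPECIES = [
    Species("holm oak", (10.0, 20.0), (0.3, 0.7)),
    Species("hypothetical cold-steppe herb", (-2.0, 12.0), (0.4, 0.9)),
]

glacial_cell = suitable_species(temp_c=4.0, humidity=0.6, species=SPECIES)
interglacial_cell = suitable_species(temp_c=15.0, humidity=0.5, species=SPECIES)
```

With these invented ranges, the cold cell receives only the cold-steppe herb and the warm cell only the holm oak, which is the kind of contrast between glacial and interglacial landscapes that the simulation is meant to show.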

Combined with an immersive 3D model of the entire valley, the result lets archaeological researchers move around the valley at 1:1 scale and appreciate the relief of the terrain and the distances, the density of the vegetation cover, the places where natural barriers were crossed, and the areas where animals gathered and passed. These are all important points of reference for understanding the mobility of hunter-gatherers. It is also possible to observe assemblages of the plants whose pollen has been found fossilised in the cave, or to follow the evolution of the landscape.

54 years of excavations

Behind this virtual reconstruction is "Schopper", a simulator for testing hypotheses about the environment and behaviour of prehistoric man in an immersive reconstructed environment. The principle is first to learn from archaeological data, then to formulate hypotheses on behaviour or on the environment, and finally to observe the mechanisms and impacts of these hypotheses in the reconstructed environment.

This simulator is the result of two interacting platforms.

The first is based on the database of the prehistory research laboratory in Tautavel, which is responsible for the excavation of the project's pilot site, the Caune de l'Arago. This Lower Paleolithic site of worldwide interest has yielded, among other things, the oldest human fossils on French territory.

Thanks to the work of the prehistorian Henry de Lumley, the CERP has built up a database that records 54 years of excavations using a structured methodology. It contains nearly 500,000 objects (animal bones, lithic industries, etc.), corresponding to about fifty different periods of occupation of the cave, as well as samples (sediments, pollen, etc.).

To exploit this database, Craft.AI, a start-up specialising in artificial intelligence (AI), has developed an engine for Schopper that allows scientific hypotheses to be tested. It is thus possible to query, for example, the duration of the periods of occupation of the cave, the function it had for people in the past, but also the climatic conditions.

The second platform was built by the Immersion Tools team, which specialises in integrating innovative visual presentation tools. It lets archaeologists interact in immersive virtual reality with the database inside the 3D-modelled cave, as shown in the animation below.

Each object is marked with a parallelepiped whose colour corresponds to its nature. The spatial position, orientation and inclination recorded for each object when it was discovered during excavation are preserved. Researchers have access to a range of tools enabling them to measure the distances between objects, display 3D scans or the grid, or move around by following body movements or by 'teleportation'.

Two approaches to training AI

To work, an AI tool needs to learn. With supervised learning, the approach used in Schopper, it needs to be given 'labelled' data, for example a set of flora and fauna remains associated with a certain climate.

There are two major difficulties here in archaeology. Firstly, the volume of data is small, and because the data come from several academic disciplines they are quite heterogeneous. Secondly, the data are difficult to interpret: as no one was around 400,000 years ago to record whether it was hot or cold, it is hard to know under what climatic conditions a plant grew when all we have found of it is fossil pollen.

We therefore had to adapt the AI's training methods to the specific constraints of archaeology. The first training mode proposed in Schopper is thus based on "actualism": it assumes that what happens now is, in some cases, similar to what happened a long time ago. This gives us access to a larger volume of data by enriching prehistoric data with current data.

It is assumed, for example, that the reindeer hunted by Tautavel man 450,000 years ago had the same ecology as the reindeer of today. This amounts to the hypothesis that it lived in a relatively cold climate, in arctic or subarctic regions. Likewise, the holm oak, whose pollen grains have been collected in certain levels of the Caune de l'Arago, is assumed to have been what it is today: a thermophilic, drought-resistant tree typical of the Mediterranean range.

For fauna, we refer in particular to a large WWF database listing vertebrate species in all the world's ecoregions. Each entry is a data point that feeds the learning process by associating animals with the characteristics of their environment. These characteristics can be the terrestrial biome, an average annual temperature, or the total rainfall in millimetres over the year.
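The actualist idea can be sketched in a few lines: modern ecoregions, each with a species list and a known climate, serve as labelled examples, and a fossil assemblage is given the climate of the modern ecoregions it most resembles. The sketch below uses a nearest-neighbour estimate with Jaccard similarity, which is our choice for illustration and not necessarily the model used in Schopper. The ecoregion records, the species lists and the temperatures are invented and do not come from the WWF database.

```python
def jaccard(a, b):
    """Jaccard similarity between two sets of species names."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def predict_temperature(assemblage, ecoregions, k=2):
    """Average the temperature of the k modern ecoregions most similar to the assemblage."""
    ranked = sorted(
        ecoregions,
        key=lambda region: jaccard(assemblage, region["species"]),
        reverse=True,
    )
    nearest = ranked[:k]
    return sum(region["mean_temp_c"] for region in nearest) / len(nearest)


# Invented modern ecoregions: species present and mean annual temperature in °C.
ECOREGIONS = [
    {"species": {"reindeer", "arctic fox", "musk ox"}, "mean_temp_c": -8.0},
    {"species": {"reindeer", "wolf", "horse"}, "mean_temp_c": -2.0},
    {"species": {"red deer", "wild boar", "wolf"}, "mean_temp_c": 10.0},
    {"species": {"fallow deer", "wild boar", "rabbit"}, "mean_temp_c": 14.0},
]

# Hypothetical fauna from one archaeological level.
fossil_level = {"reindeer", "horse", "musk ox"}
estimate = predict_temperature(fossil_level, ECOREGIONS, k=2)
```

Here the two most similar ecoregions are the two cold ones, so the estimate is their average. With real data the same principle applies to many more species and to other targets such as biome or annual rainfall.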

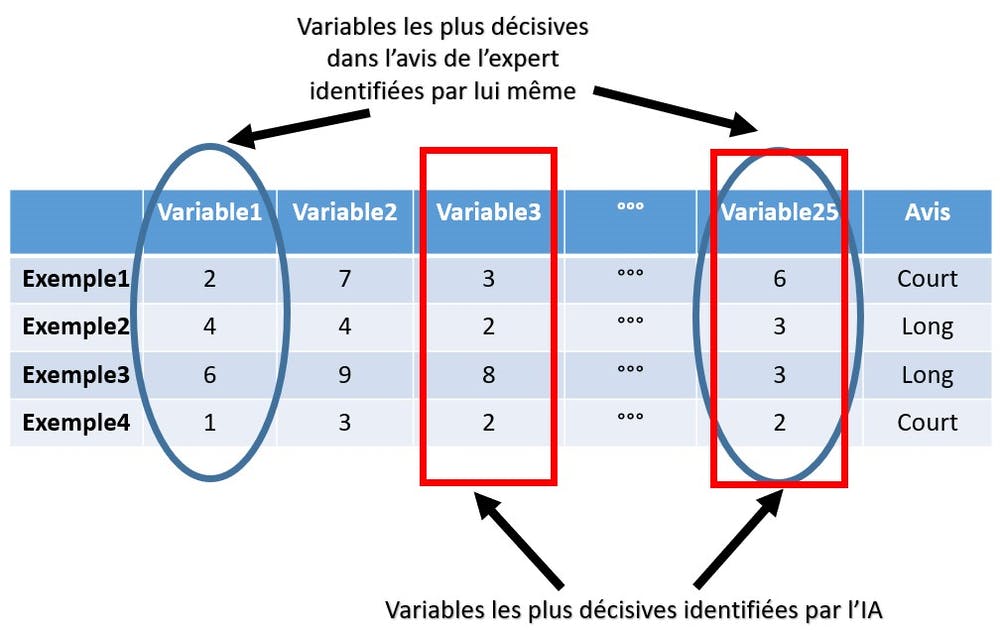

The second method used is based on "expert opinion". An archaeologist, depending on his or her speciality, will, for example, deduce from a set of data that people at a certain date had only briefly resided in the cave.

The AI then examines the same elements to identify those that it believes led the researcher to give this opinion. The algorithm may also conclude that the variables that were decisive in the final decision differ from those stated by the expert in his or her articles.
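One simple way to picture this search for the decisive variables is a "decision stump": try every variable and every threshold, and keep the single rule that best reproduces the expert's labels. This is only a sketch of the idea, since the technique actually used in Schopper is not described here. The variable names, the values and the labels below are invented.

```python
def best_stump(rows, labels):
    """Find the one-variable threshold rule that best reproduces the expert's labels.

    Returns (accuracy, feature, threshold, label_when_above).
    """
    best = None
    for feature in rows[0]:
        for threshold in sorted({row[feature] for row in rows}):
            for label_when_above in (True, False):
                predictions = [
                    (row[feature] > threshold) == label_when_above for row in rows
                ]
                accuracy = sum(
                    p == y for p, y in zip(predictions, labels)
                ) / len(labels)
                # Strict comparison: on a tie, the first rule found is kept.
                if best is None or accuracy > best[0]:
                    best = (accuracy, feature, threshold, label_when_above)
    return best


# Invented archaeological levels described by two hypothetical variables.
LEVELS = [
    {"lithics_per_m2": 5, "carnivore_marks": 0.4},
    {"lithics_per_m2": 8, "carnivore_marks": 0.1},
    {"lithics_per_m2": 40, "carnivore_marks": 0.3},
    {"lithics_per_m2": 55, "carnivore_marks": 0.05},
]
# Invented expert opinion: True means "short occupation".
SHORT_OCCUPATION = [True, True, False, False]

rule = best_stump(LEVELS, SHORT_OCCUPATION)
```

On this toy data the rule found is "short occupation when `lithics_per_m2` is at most 8". If the expert had written that carnivore marks were the deciding factor, this is exactly the kind of discrepancy the paragraph above describes.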

Use of models

Once the data has been prepared, the model is trained through a series of iterations to identify the optimal parameters. These iterations are interspersed with validation steps that measure how well the model has learned and how well it generalises. In this respect, machine learning follows the principle of Ockham's razor: a simpler model is preferred to an overly complex explanation.
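The loop of fitting, validating and preferring the simpler model can be sketched as follows. Two toy models, a constant and a straight line, are scored by leave-one-out validation, and the simplest model whose error is within a tolerance of the best one is kept. The models, the 5% tolerance and the data are assumptions chosen for the example, not the project's actual settings.

```python
def fit_mean(xs, ys):
    """Simplest model: always predict the mean of the training targets."""
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y


def fit_line(xs, ys):
    """Ordinary least-squares straight line."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sxx
    return lambda x: mean_y + slope * (x - mean_x)


def loo_error(fit, xs, ys):
    """Leave-one-out mean squared error: each point is predicted from all the others."""
    total = 0.0
    for i in range(len(xs)):
        model = fit(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        total += (model(xs[i]) - ys[i]) ** 2
    return total / len(xs)


def select_model(candidates, xs, ys, tolerance=0.05):
    """Candidates are ordered simplest first; return the first one close enough to the best."""
    errors = {name: loo_error(fit, xs, ys) for name, fit in candidates}
    best = min(errors.values())
    for name, _ in candidates:
        if errors[name] <= best * (1 + tolerance):
            return name, errors


CANDIDATES = [("mean", fit_mean), ("line", fit_line)]
XS = [1.0, 2.0, 3.0, 4.0, 5.0]

# Invented data with a clear trend: the line is worth its extra parameter.
chosen_trend, errors_trend = select_model(CANDIDATES, XS, [2.1, 3.9, 6.2, 7.8, 10.1])

# Invented flat data: both models fit equally well, so the simpler one is kept.
chosen_flat, errors_flat = select_model(CANDIDATES, XS, [5.0, 5.0, 5.0, 5.0, 5.0])
```

Scoring each model on data it has not seen is what guards against overfitting, and ordering the candidates from simplest to most complex is one straightforward way of putting Ockham's razor into practice.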

Finally, the models are applied to infer the biome, the type of climate, the temperature, the amount of precipitation, and the duration of occupation and function of the site, for the region of the Caune de l'Arago at different times.

Explanatory algorithms such as SHAP are also used to understand why a model reaches one decision rather than another. This allows archaeologists who are not experts in machine learning to understand the decision-making processes involved in the models they use.
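SHAP is built on Shapley values, which share out a prediction among the input variables. For a model with only a few variables they can be computed exactly by enumerating every coalition of variables, as in the sketch below. This is not the SHAP library itself, which relies on faster approximations for realistic models. The toy model, its coefficients and the variable names are invented for the example.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley value of each feature for one prediction.

    Features left out of a coalition take their baseline value.
    """
    names = list(instance)
    n = len(names)

    def value(coalition):
        x = {f: (instance[f] if f in coalition else baseline[f]) for f in names}
        return predict(x)

    phi = {}
    for f in names:
        others = [g for g in names if g != f]
        total = 0.0
        for size in range(n):
            # Shapley weight of a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


def toy_model(x):
    """Hypothetical 'cold climate' score, linear in three invented variables."""
    return (
        2.0 * x["reindeer_share"]
        - 1.0 * x["holm_oak_pollen"]
        + 0.5 * x["horse_share"]
    )


INSTANCE = {"reindeer_share": 0.5, "holm_oak_pollen": 0.25, "horse_share": 1.0}
BASELINE = {"reindeer_share": 0.0, "holm_oak_pollen": 0.0, "horse_share": 0.0}

phi = shapley_values(toy_model, INSTANCE, BASELINE)
```

The contributions add up to the difference between the prediction for the instance and the prediction for the baseline. In this toy case reindeer push the score up, holm oak pollen pulls it down, and an archaeologist can read off which remains drove the model's conclusion.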

It now remains to deepen the model's treatment of the behaviour of our ancestors. Unfortunately, this comes up against the difficulties of establishing solid learning references with little data on such ancient periods. The project consortium is nevertheless working on new technical avenues to improve AI performance and add immersion through sound. This will be a continuation of Schopper's developments.

This article was written with Philippe Carrez, founder of Immersion Tools, and Matthieu Boussard, Research and Development engineer at Craft.AI, two partners of the Schopper project.
